Experimentation That Scales Across Your Entire Portfolio

Governance frameworks, parallel execution across brands, and institutional knowledge systems — for organizations where experimentation must survive organizational complexity, not just produce isolated wins.

DRIP Agency architects enterprise experimentation programs for multi-brand e-commerce organizations with €50M+ in revenue. We replace fragmented testing efforts with centralized infrastructure: governance protocols, frequentist statistical standards aligned with Georgi Georgiev's methodology, cross-brand knowledge transfer, and executive reporting that quantifies experimentation maturity. Our programs span 4,000+ experiments across 250+ client projects, generating €500M+ in measured revenue impact. Enterprise clients like Coop run structured testing across 10 e-commerce brands simultaneously through our frameworks, while organizations like Giesswein have generated €12.2M in additional revenue over three years of sustained experimentation.

Why Enterprise Experimentation Programs Stall

Your first brand ran A/B tests successfully. The board noticed. Now leadership wants experimentation across the portfolio — every brand, every market, every channel. But what worked with one team and one storefront collapses at organizational scale.

The failure mode is always the same: each brand builds its own testing program, its own methodology, its own analytics interpretation. Six months in, you have five brands running experiments with no shared learnings, no comparable metrics, and no way for leadership to evaluate whether experimentation is actually compounding value or just generating activity.

The real cost is not inefficiency. It is the institutional knowledge that never accumulates. Every brand repeats the same mistakes, tests the same obvious hypotheses, and learns nothing from the portfolio's collective intelligence.

- Siloed testing programs: each brand reinvents methodology with no shared standards
- No governance framework: test quality and statistical rigor vary wildly across teams
- Winning insights from one market are never systematically replicated in others
- Tool sprawl: different platforms, analytics setups, and reporting structures per brand
- Learnings do not compound: there is no institutional memory across the portfolio
- Leadership cannot distinguish testing activity from genuine experimentation maturity

Enterprise experimentation is not a larger version of single-brand CRO. It requires purpose-built infrastructure for governance, cross-brand knowledge management, and executive accountability.

How We Build Enterprise Experimentation Programs

We treat experimentation as an organizational capability, not a collection of brand-level projects. The program architecture ensures that every experiment — across every brand — contributes to a compounding knowledge base while maintaining the statistical rigor that makes results trustworthy.

1. Portfolio Experimentation Audit

We audit the current state of testing across your portfolio: tools, processes, statistical methods, team capabilities, analytics infrastructure, and decision-making workflows. This produces a maturity assessment for each brand and a gap analysis against what structured enterprise experimentation requires. Most organizations discover that what they call 'experimentation' is actually disconnected A/B testing with inconsistent methodology.

2. Governance Framework Design

From the audit, we build the governance layer: standardized hypothesis templates tied to psychological drivers, approval and prioritization workflows, QA protocols for cross-browser and cross-device validation, frequentist statistical standards with predetermined sample sizes, and result documentation requirements. This framework applies across all brands while allowing brand-specific customization where justified. It eliminates the inconsistency that makes cross-brand comparison impossible.

3. Parallel Execution Across Brands

With governance in place, we launch structured testing across brands in staged waves. Each brand receives a tailored research phase — customer psychology profiling using our 7 Psychological Drivers framework, funnel analysis, and Category Entry Point identification — but within the shared methodology. Winning hypotheses from one brand feed directly into testing queues for adjacent brands. A product page insight proven at one fashion brand can be adapted and tested across three others within weeks.

4. Cross-Brand Knowledge Transfer

Every experiment — win, loss, or inconclusive — is documented in our Research Hub with structured metadata: hypothesis, audience segment, page type, psychological driver, statistical outcome, and revenue impact. With 1,724 qualitative analyses in the system, this becomes the organization's experimentation memory. The system enables pattern recognition across brands: which psychological drivers dominate in which categories, which elements carry the highest revenue sensitivity, and where cross-brand learnings transfer reliably.
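As a rough illustration of the structured metadata described above, the sketch below models one experiment record and a simple cross-brand pattern query. The class name, field names, brand names, and driver labels (`driver_a`, `driver_b`) are hypothetical placeholders, not the actual Research Hub schema or the names of the 7 Psychological Drivers.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ExperimentRecord:
    """One documented experiment (hypothetical schema, for illustration only)."""
    brand: str
    hypothesis: str
    audience_segment: str
    page_type: str
    psychological_driver: str
    outcome: str  # "win", "loss", or "inconclusive"
    revenue_impact_eur: float


def win_rate_by_driver(records):
    """Return {(page_type, driver): share of experiments that won}."""
    totals = defaultdict(int)
    wins = defaultdict(int)
    for r in records:
        key = (r.page_type, r.psychological_driver)
        totals[key] += 1
        if r.outcome == "win":
            wins[key] += 1
    return {key: wins[key] / totals[key] for key in totals}


# Illustrative records only; these are not real client results.
records = [
    ExperimentRecord("brand_a", "Clearer sizing info", "new visitors",
                     "product_page", "driver_a", "win", 12000.0),
    ExperimentRecord("brand_b", "Clearer sizing info", "new visitors",
                     "product_page", "driver_a", "loss", 0.0),
    ExperimentRecord("brand_c", "Shorter checkout form", "returning",
                     "checkout", "driver_b", "inconclusive", 0.0),
]
rates = win_rate_by_driver(records)
```

Because losses and inconclusive tests are stored alongside wins, a query like this shows where a pattern holds across brands and where it does not.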

5. Executive Reporting & Program Scaling

We deliver portfolio-level dashboards that give VP and C-level stakeholders visibility into experimentation maturity across every brand: velocity, win rate, cumulative revenue impact, knowledge transfer rate, and backlog depth. This transforms experimentation from a tactical activity into a board-level strategic capability with quantifiable ROI. As the program matures, we expand scope — new brands, new markets, new channels — while the governance layer ensures quality does not degrade with scale.

The result is an organization that builds institutional knowledge from every experiment and deploys that knowledge across its entire portfolio at increasing velocity — not just running tests, but compounding what it learns.

Numbers From the Field

Across 4,000+ experiments, our programs deliver a 36.3% win rate, defined as the share of experiments that produce a statistically significant, positive revenue outcome. The figure reflects the frequentist discipline of testing genuine hypotheses rather than inflating results with weak tests or premature stopping.

Winning experiments produce an average +4.15% uplift in revenue per visitor. Compounded across a multi-brand portfolio running parallel tests, this translates to material incremental revenue each quarter without additional traffic acquisition spend.

Our median test duration of 42 days reflects the statistical patience required for trustworthy results. We do not call tests early, do not use sequential stopping rules without pre-specification, and do not accept directional results as evidence. Enterprise programs require this rigor because decisions propagate across the entire portfolio.

Results That Speak for Themselves

Giesswein

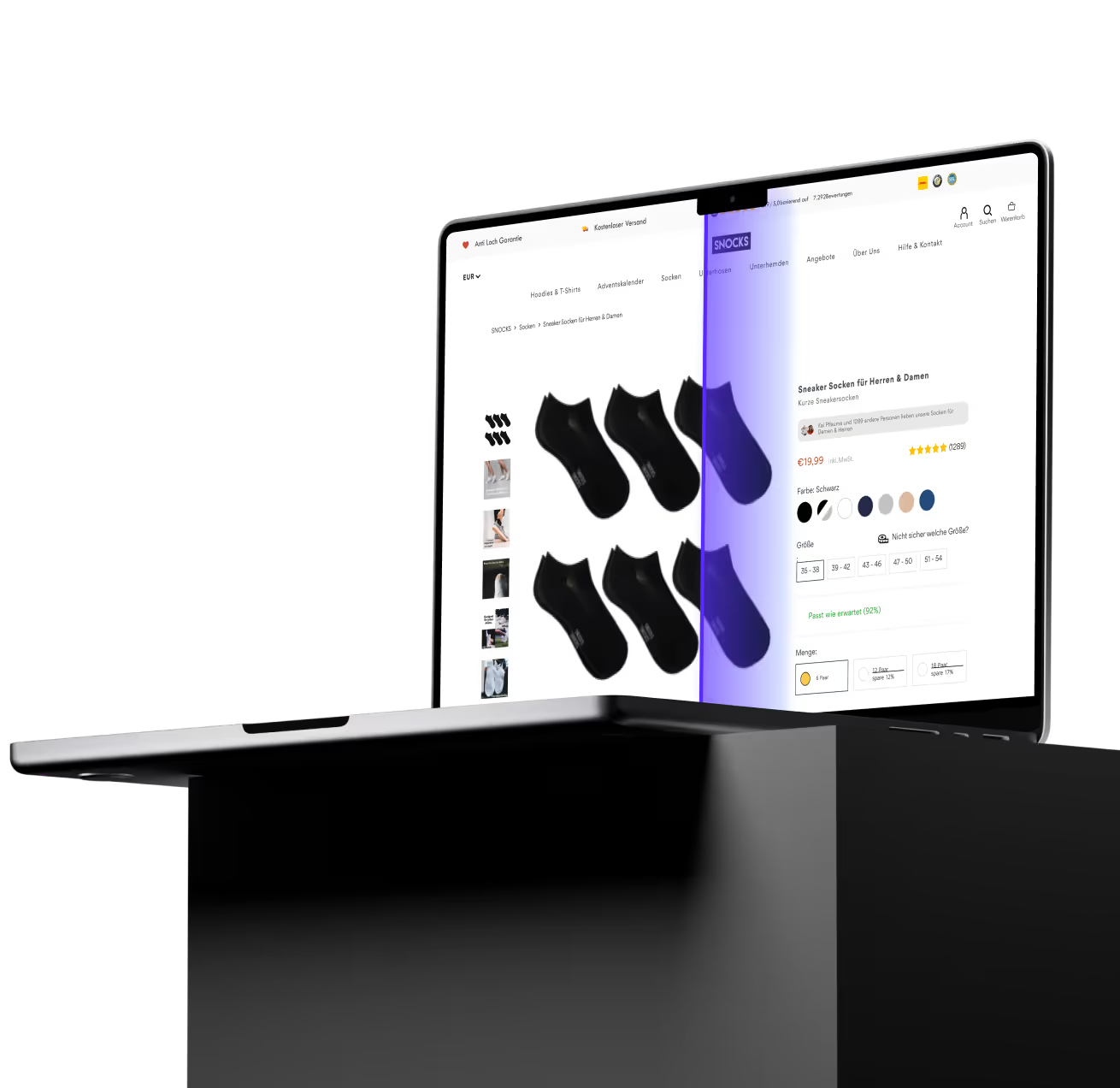

SNOCKS

Go Deeper

Conversion Optimization License

The single-brand CRO program that forms the foundation of our enterprise experimentation infrastructure.

Our Process

How the 7 Psychological Drivers framework and frequentist methodology work at the individual brand level.

Case Studies

Detailed results from enterprise and single-brand experimentation programs across 250+ projects.

Build an Enterprise Experimentation Engine

If your organization runs multiple e-commerce brands and you are ready to turn experimentation from scattered activity into a compounding strategic capability — let us architect the program together.

Common Questions

The fundamental difference between an enterprise program and separate CRO programs per brand is infrastructure. Separate programs mean separate methodologies, separate knowledge bases, and separate reporting, which makes cross-portfolio learning impossible. An enterprise program provides shared governance that standardizes test quality, centralized knowledge management through our Research Hub (1,724 qualitative analyses and counting), unified frequentist statistical standards that enable comparable results across brands, and coordinated resource allocation that eliminates duplicated effort. Without this layer, each brand reinvents the same hypotheses independently. With it, a winning insight at one brand feeds testing queues across the portfolio within weeks.

We use frequentist methodology aligned with the work of Georgi Georgiev — predetermined sample sizes, fixed-horizon tests, and strict significance thresholds. This matters at enterprise scale because results from one brand inform decisions across the entire portfolio. If the underlying methodology is unsound — sequential testing without proper correction, Bayesian approaches with poorly specified priors, or premature test stopping — errors propagate across every brand that acts on those results. Our 42-day median test duration reflects this discipline. We do not trade statistical integrity for speed.
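To make "predetermined sample sizes" concrete, here is a minimal sketch of a fixed-horizon sample size calculation. It uses the textbook normal-approximation formula for comparing two conversion rates and is an assumption for illustration, not DRIP's actual calculator. Real programs that optimize revenue per visitor would need a variance estimate for that metric instead. The function name, parameters, and default thresholds are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, relative_uplift,
                        alpha=0.05, power=0.8, two_sided=True):
    """Visitors needed per variant to detect the given relative uplift.

    Standard normal-approximation formula for two proportions:
    n = (z_alpha + z_beta)^2 * (p1(1-p1) + p2(1-p2)) / (p2 - p1)^2
    """
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_uplift)
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2) if two_sided else z.inv_cdf(1 - alpha)
    z_beta = z.inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


# Example: 3% baseline conversion rate, 5% relative uplift to detect.
n_two_sided = sample_size_per_arm(0.03, 0.05)
n_one_sided = sample_size_per_arm(0.03, 0.05, two_sided=False)
```

The sample size is fixed before launch and the test is evaluated once it is reached. Small uplifts on low baseline rates require large samples, which is one reason test durations run to several weeks.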

Our experimentation infrastructure is platform-agnostic. Testing tools integrate at the code level across Shopify, Shopify Plus, WooCommerce, Magento, custom builds, and enterprise platforms. The governance framework and Research Hub sit above the platform layer, so a standardized experiment workflow applies regardless of whether Brand A runs on Shopify Plus and Brand B runs on a custom headless stack. Our QA team validates every test across real devices before launch, regardless of the underlying technology.

The initial program architecture (portfolio audit, governance framework design, and infrastructure setup) takes 4 to 8 weeks depending on portfolio size and complexity. The first brand begins testing by the end of this phase, with additional brands onboarded in staged waves. Most multi-brand organizations reach full portfolio coverage within 3 to 6 months. The knowledge management system begins delivering cross-brand insights after approximately 50 to 100 experiments across the portfolio, which enterprise programs typically reach within the first 4 to 6 months. Coop moved from zero structured experimentation to a unified program across 10 brands within this timeline.

ROI is measured at three levels. Direct revenue impact: the cumulative additional revenue from winning experiments across the portfolio, measured through controlled tests with frequentist significance standards. Velocity efficiency: how many experiments the organization runs per quarter compared to the pre-program baseline. Knowledge leverage: the rate at which winning insights from one brand are successfully transferred and validated across others, reducing the marginal cost of each incremental experiment. We provide monthly executive reporting across all three dimensions with portfolio-level dashboards that give leadership visibility into experimentation maturity across every brand.
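The three ROI levels can be sketched as simple aggregates over a list of experiment results. The definitions below are one plausible reading of the description above, not the formulas behind the actual dashboards. The `Result` class, its fields, and the `roi_summary` function are hypothetical names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Result:
    brand: str
    won: bool
    revenue_impact_eur: float           # 0.0 for non-winners in this sketch
    adapted_from: Optional[str] = None  # source brand if the idea was transferred


def roi_summary(results, quarters, baseline_tests_per_quarter):
    """Aggregate direct revenue, velocity, and knowledge leverage."""
    wins = [r for r in results if r.won]
    transfers = [r for r in results if r.adapted_from is not None]
    validated = [r for r in transfers if r.won]
    return {
        # Cumulative additional revenue from winning experiments.
        "direct_revenue_eur": sum(r.revenue_impact_eur for r in wins),
        # Tests per quarter relative to the pre-program baseline.
        "velocity_ratio": (len(results) / quarters) / baseline_tests_per_quarter,
        # Share of transferred ideas that also won at the receiving brand.
        "knowledge_leverage": len(validated) / len(transfers) if transfers else 0.0,
    }


# Illustrative data only; these are not real client figures.
summary = roi_summary(
    [
        Result("brand_a", True, 1000.0),
        Result("brand_b", True, 500.0, adapted_from="brand_a"),
        Result("brand_c", False, 0.0, adapted_from="brand_a"),
        Result("brand_a", False, 0.0),
    ],
    quarters=1,
    baseline_tests_per_quarter=2,
)
```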

DRIP can work alongside your internal teams, and this is one of the most common engagement models for enterprise clients. DRIP operates as the center of excellence, providing governance frameworks, statistical methodology, Research Hub infrastructure, and strategic oversight, while internal teams handle day-to-day execution within their respective brands. We train internal teams on governance standards, QA protocols, and documentation requirements, then provide ongoing quality assurance and cross-brand coordination. Over time, many organizations internalize more execution capability while retaining DRIP for strategic direction and the cross-brand knowledge layer that is difficult to replicate internally.