A/B Testing That Compounds Revenue Quarter Over Quarter

DRIP runs structured experimentation programs for e-commerce brands — grounded in consumer psychology, executed through parallel testing, and held to frequentist statistical standards.

DRIP Agency is a specialized A/B testing agency for e-commerce brands. We design and run structured experimentation programs that compound revenue growth quarter over quarter. Every test hypothesis starts with consumer psychology research — not gut instinct. We run multiple tests simultaneously using our parallel testing protocol, evaluate results with frequentist statistics (no peeking, no Bayesian shortcuts), and feed learnings back into a self-improving prioritization engine. The result: a testing program that gets smarter and more profitable the longer it runs.

Why Most A/B Testing Programs Fail to Move the Needle

Brands invest in A/B testing expecting compounding revenue growth. Instead, they get a trickle of inconclusive results, tests that run too short, and a roadmap driven by opinion rather than evidence. The testing tool works fine — the program around it is the problem.

This is what we see repeatedly across brands that come to us after trying to build testing in-house or working with generalist agencies:

- Tests are based on 'best practices' and competitor copying rather than actual customer research — so the hit rate stays below 20%

- Tests end too early because someone wants to ship the winner — leading to false positives that erode trust in the entire program

- Only one test runs at a time, creating a bottleneck that limits learning velocity to 2-3 tests per month

- No systematic learning loop — each test exists in isolation, and the team keeps re-testing the same surface-level ideas

- Results are reported in conversion rate lifts without connecting to actual revenue impact, making it impossible to justify continued investment
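The second failure mode, ending tests early, is easy to underestimate. The sketch below is a hypothetical Python simulation, not DRIP's tooling. It runs A/A tests in which variant and control are identical, and approximates the test statistic as a normal random walk over ten equally sized batches of data (a large-sample assumption). It then compares two rules: testing once at the planned end, or stopping at the first look that crosses p < 0.05. The function name `false_positive_rates` and its defaults are illustrative.

```python
import math
import random
from statistics import NormalDist


def false_positive_rates(n_sims: int = 5000, looks: int = 10,
                         alpha: float = 0.05, seed: int = 7) -> tuple[float, float]:
    """Simulate A/A tests (no real difference) under two stopping rules.

    Returns (fixed_horizon_rate, peeking_rate): the share of null tests declared
    significant when tested once at the planned end, versus when checked after
    every batch and stopped at the first significant look.
    """
    rng = random.Random(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value (~1.96)
    fixed_hits = peek_hits = 0
    for _ in range(n_sims):
        running_sum = 0.0
        crossed = False
        z = 0.0
        for k in range(1, looks + 1):
            # Each batch adds an independent N(0, 1) increment; under H0 the
            # cumulative z-statistic after k equal batches is S_k / sqrt(k).
            running_sum += rng.gauss(0.0, 1.0)
            z = running_sum / math.sqrt(k)
            if abs(z) > z_crit:
                crossed = True  # a team that peeks would stop and ship here
        peek_hits += crossed
        fixed_hits += abs(z) > z_crit  # only the final look counts
    return fixed_hits / n_sims, peek_hits / n_sims


fixed_rate, peek_rate = false_positive_rates()
print(f"fixed horizon: {fixed_rate:.3f}  |  peeking at every look: {peek_rate:.3f}")
```

With ten looks, the peeking rule typically flags roughly 19% of identical variants as winners. The fixed-horizon rule stays near the nominal 5%.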

The gap is not in tooling. It is in methodology: how hypotheses are generated, how tests are structured, how results are evaluated, and how learnings compound over time. That is the problem a proper A/B testing agency solves.

How DRIP's Testing Program Works

Our approach is built around four interconnected systems. Each one addresses a specific failure mode in traditional A/B testing programs — and together, they create a compounding engine that accelerates over time.

1. Psychology-Led Research

Every test hypothesis starts with customer psychology research, not guesswork. We map your audience's decision drivers using our 7 Psychological Drivers framework — Progress, Curiosity, Security, Status, Autonomy, Comfort, and Belonging — combined with Category Entry Point analysis. This means hypotheses are grounded in how your customers actually think and buy, which is why our win rate sits at 36.3% versus the industry average of roughly 20%.

2. Parallel Testing Protocol

We run 6-10 experiments simultaneously using our parallel testing infrastructure. Each test is isolated to prevent interaction effects, while the program as a whole maximizes learning velocity. Where sequential testing delivers 20-30 tests per year, parallel testing delivers 80-100+ — compressing years of learning into months.
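The protocol above says each concurrent test is isolated to prevent interaction effects. The page does not describe DRIP's infrastructure, so this sketch assumes one common approach: deterministic hash-based assignment that places each visitor in exactly one of the running experiments. The bucket count, salts and experiment names are hypothetical.

```python
import hashlib

BUCKETS = 10_000


def bucket(visitor_id: str, salt: str) -> int:
    """Map a visitor to a stable bucket in [0, BUCKETS) via SHA-256."""
    digest = hashlib.sha256(f"{salt}:{visitor_id}".encode("utf-8")).hexdigest()
    return int(digest[:15], 16) % BUCKETS


def assign(visitor_id: str, experiments: list[str]) -> tuple[str, str]:
    """Place a visitor in exactly one concurrent experiment, then in one arm.

    The first hash splits traffic into equal, mutually exclusive slices, so no
    visitor is exposed to two tests at once. A second hash, salted with the
    experiment name, splits that slice 50/50 into control and variant.
    """
    slot = bucket(visitor_id, salt="parallel-layer") * len(experiments) // BUCKETS
    experiment = experiments[slot]
    arm = "control" if bucket(visitor_id, salt=experiment) < BUCKETS // 2 else "variant"
    return experiment, arm


# Hypothetical experiment names, for illustration only.
running = ["pdp-social-proof", "cart-trust-badges", "checkout-copy"]
print(assign("visitor-123", running))
```

Mutually exclusive slices rule out interactions between concurrent tests, at the cost of giving each test only a fraction of the traffic. The common alternative is overlapping layers, where a visitor can be in several tests at once. That approach keeps full traffic per test but relies on randomization and interaction checks instead.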

3. Statistical Rigor

We are strictly frequentist, aligned with the statistical standards set by practitioners like Georgi Georgiev. Every test runs to its predetermined sample size or to a pre-registered stopping boundary. No peeking. No ad hoc early stopping. No Bayesian posterior probabilities presented as certainties. We use fixed-horizon hypothesis testing, sequential testing with pre-specified alpha-spending boundaries where appropriate, and always report confidence intervals alongside p-values.
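The page mentions sequential testing with alpha spending but does not say which spending function DRIP uses. This sketch assumes the common Lan-DeMets O'Brien-Fleming-type function. It shows how a 5% overall error budget is spent across four planned interim analyses. Almost nothing is spent early, so stopping at an early look requires overwhelming evidence. The Pocock-type function is included only for contrast.

```python
import math
from statistics import NormalDist

_NORMAL = NormalDist()


def obrien_fleming_spend(t: float, alpha: float = 0.05) -> float:
    """Cumulative two-sided alpha spent by information fraction t in (0, 1].

    Lan-DeMets O'Brien-Fleming-type function:
    alpha(t) = 2 - 2 * Phi(z_{1 - alpha/2} / sqrt(t)).
    """
    if not 0 < t <= 1:
        raise ValueError("information fraction must be in (0, 1]")
    z = _NORMAL.inv_cdf(1 - alpha / 2)
    return 2 - 2 * _NORMAL.cdf(z / math.sqrt(t))


def pocock_spend(t: float, alpha: float = 0.05) -> float:
    """Lan-DeMets Pocock-type function, for contrast: alpha * ln(1 + (e - 1) t)."""
    if not 0 < t <= 1:
        raise ValueError("information fraction must be in (0, 1]")
    return alpha * math.log(1 + (math.e - 1) * t)


# Four equally spaced analyses at 25%, 50%, 75% and 100% of the planned sample.
previous = 0.0
for t in (0.25, 0.5, 0.75, 1.0):
    spent = obrien_fleming_spend(t)
    print(f"t={t:.2f}  cumulative alpha={spent:.5f}  new at this look={spent - previous:.5f}"
          f"  (Pocock cumulative={pocock_spend(t):.5f})")
    previous = spent
```

Converting each look's share of alpha into an actual z-boundary requires numerical integration over the correlated sequence of test statistics. Dedicated tools such as the R packages gsDesign and ldbounds perform that step. This sketch stops at the spending schedule.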

4. Compounding Learning System

Every test result — win, loss, or inconclusive — feeds back into our Research Hub, a proprietary knowledge base built on 4,000+ experiments across 250+ client projects. This creates a self-improving system: the more we test, the better our hypotheses become, the higher the win rate climbs, and the faster revenue compounds. Brands in their second year with us consistently outperform their first.

This is not a testing-as-a-service bolt-on. It is a structured experimentation program designed to become the highest-ROI growth channel in your marketing mix.

Numbers From the Field

Across 4,000+ controlled experiments in our database.

Revenue per visitor uplift on winning tests.

Proper runtime prevents false positives and ensures statistical rigor.

Results That Speak for Themselves

KoRo

Kickz


Ready to Run Experiments That Actually Move Revenue?

Book a strategy call to discuss how DRIP's testing program can compound growth for your e-commerce brand.


Common Questions

DRIP Agency is built specifically for e-commerce experimentation at scale. Three things distinguish our approach. First, every hypothesis originates from consumer psychology research using our 7 Psychological Drivers framework, not from best-practice lists or competitor copying. Second, we run 6-10 experiments simultaneously through our parallel testing protocol, delivering 80-100+ tests per year compared to the 20-30 that sequential testing allows. Third, we are strictly frequentist in our statistical methodology: no Bayesian shortcuts, no unplanned early stopping, no peeking at results outside the predetermined analysis plan. This combination produces a 36.3% win rate across 4,000+ experiments, nearly double the industry benchmark of roughly 20%.

DRIP Agency works with all major A/B testing platforms including AB Tasty, VWO, Optimizely, and Convert. The tool matters far less than the methodology. Most testing programs fail not because of the platform, but because of weak hypothesis generation, insufficient sample sizes, premature test stopping, and a lack of systematic learning. We select the platform that fits your tech stack and traffic volume, then build the research, statistical, and learning infrastructure that makes the tool productive.

DRIP's parallel testing protocol typically delivers 8-12 new experiments per month, with 6-10 running simultaneously at any given time. Over a 12-month engagement, this adds up to 80-100+ experiments, each building on the learnings of those before it. The exact cadence depends on your site traffic and the number of testable pages, which we assess during the initial research phase.

We evaluate every experiment using frequentist hypothesis testing with fixed-horizon designs. Primary metrics are revenue per visitor (RPV) and conversion rate, always reported with confidence intervals, with the significance threshold set at a 95% confidence level (α = 0.05) or stricter. We do not use Bayesian posterior probabilities, and we never stop tests early based on unplanned looks at interim results. Beyond individual test outcomes, we track portfolio-level metrics: cumulative revenue impact, win rate trends, and learning velocity across the entire experimentation program.
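Here is a minimal sketch of how the two primary metrics could be evaluated at a 95% confidence level. It assumes samples large enough for normal approximations to hold. Conversion rate gets a two-proportion z-test with a Wald interval, and revenue per visitor gets a Welch-style interval. The function names and example counts are hypothetical; DRIP's actual analysis pipeline is not described on this page.

```python
import math
from statistics import NormalDist, mean, variance

_NORMAL = NormalDist()


def conversion_rate_test(conv_a: int, n_a: int, conv_b: int, n_b: int,
                         alpha: float = 0.05):
    """Two-sided two-proportion z-test for B vs. A, plus a Wald CI for p_B - p_A."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    diff = p_b - p_a
    # Pooled standard error for the test statistic (assumes H0: p_A == p_B).
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se_pooled = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    p_value = 2 * (1 - _NORMAL.cdf(abs(diff / se_pooled)))
    # Unpooled standard error for the confidence interval.
    se_diff = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z_crit = _NORMAL.inv_cdf(1 - alpha / 2)
    return diff, p_value, (diff - z_crit * se_diff, diff + z_crit * se_diff)


def rpv_difference(revenue_a: list[float], revenue_b: list[float],
                   alpha: float = 0.05):
    """Difference in revenue per visitor (B - A) with a large-sample CI.

    Each list holds one value per visitor (0.0 for visitors who did not buy).
    Uses Welch's unequal-variance standard error with a normal critical value.
    """
    diff = mean(revenue_b) - mean(revenue_a)
    se = math.sqrt(variance(revenue_a) / len(revenue_a)
                   + variance(revenue_b) / len(revenue_b))
    z_crit = _NORMAL.inv_cdf(1 - alpha / 2)
    return diff, (diff - z_crit * se, diff + z_crit * se)


# Hypothetical counts: 1,000 vs. 1,100 orders from 40,000 visitors per arm.
diff, p, ci = conversion_rate_test(1_000, 40_000, 1_100, 40_000)
print(f"CR lift {diff:+.4f}, p = {p:.4f}, 95% CI [{ci[0]:+.4f}, {ci[1]:+.4f}]")
```

Per-visitor revenue is heavily right-skewed: most visitors spend nothing, and a few spend a lot. The normal approximation for RPV therefore needs larger samples than it does for conversion rate. Some teams also cap extreme order values before analysis.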

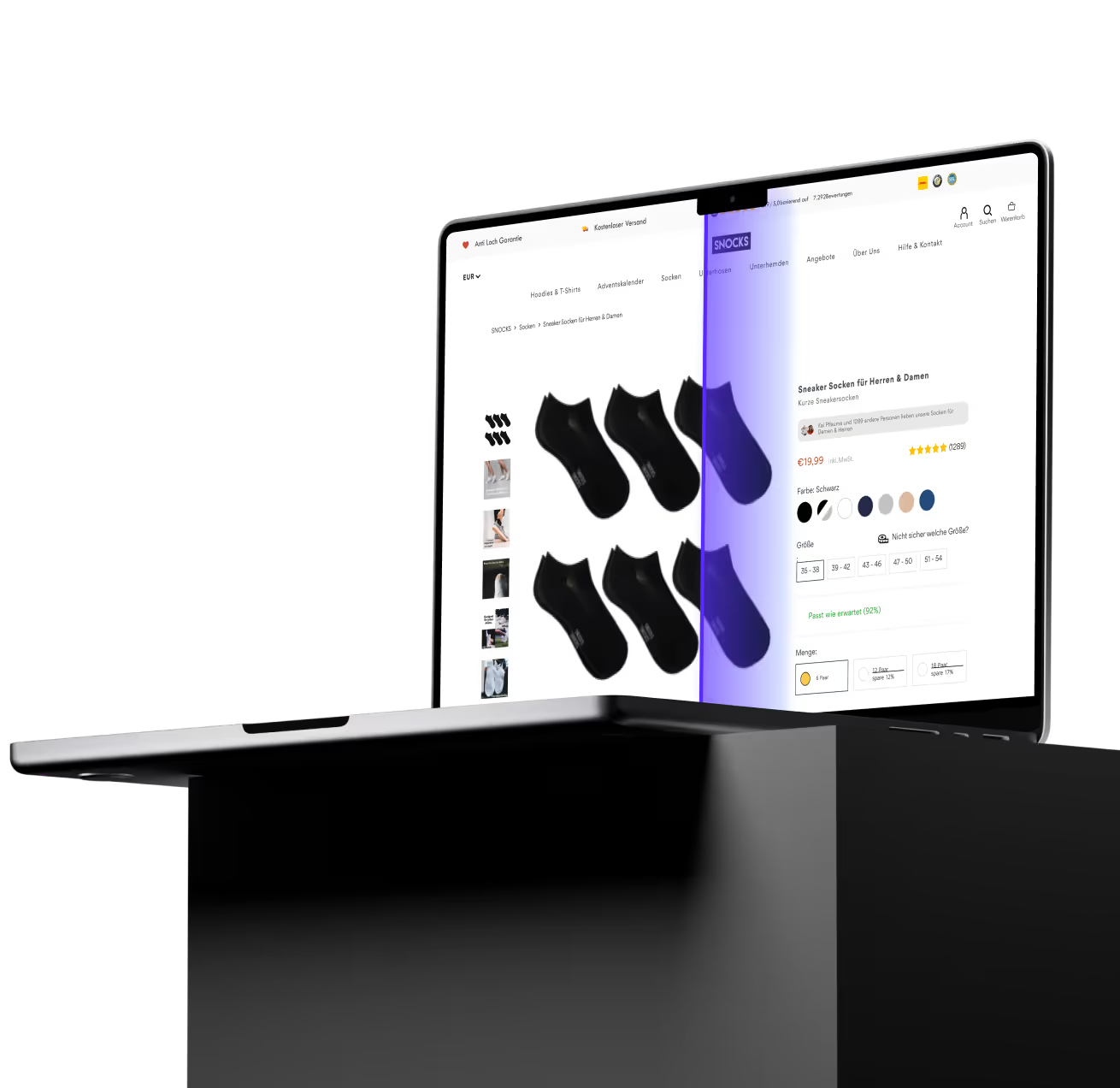

DRIP Agency's experimentation programs require sufficient traffic to reach statistical significance within reasonable timeframes. As a general guideline, brands need at least 50,000 monthly sessions on their primary conversion pages. Our portfolio includes DTC brands like SNOCKS and KoRo, multi-brand retailers like Kickz, and enterprise e-commerce operations across fashion, food, health, sports, and home goods — typically between €5M and €500M+ in annual revenue.
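The 50,000-session guideline follows from sample-size arithmetic. The sketch below uses the standard normal-approximation formula for a fixed-horizon, two-sided two-proportion test. Several inputs are illustrative assumptions rather than DRIP's planning inputs: the 3% baseline conversion rate, the 10% relative minimum detectable effect, 80% power, and the helper names.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline_cr: float, relative_mde: float,
                        alpha: float = 0.05, power: float = 0.80) -> int:
    """Visitors per arm for a two-sided, fixed-horizon two-proportion test.

    n = (z_{1-alpha/2} + z_{power})^2 * [p1(1 - p1) + p2(1 - p2)] / (p2 - p1)^2
    """
    p1 = baseline_cr
    p2 = baseline_cr * (1 + relative_mde)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    variance_sum = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_power) ** 2 * variance_sum / (p2 - p1) ** 2)


def runtime_weeks(n_per_arm: int, arms: int, monthly_sessions: int,
                  traffic_share: float = 1.0) -> int:
    """Whole weeks needed to collect n_per_arm visitors in every arm.

    traffic_share is the fraction of eligible sessions the test receives, e.g.
    1/3 if traffic is split across three mutually exclusive concurrent tests.
    """
    weekly_sessions = monthly_sessions * 12 / 52 * traffic_share
    # Round up to full weeks so each weekday appears equally often.
    return math.ceil(n_per_arm * arms / weekly_sessions)


# Illustrative inputs: 3% baseline conversion, 10% relative lift worth detecting.
n = sample_size_per_arm(baseline_cr=0.03, relative_mde=0.10)
print(n, "visitors per arm;", runtime_weeks(n, arms=2, monthly_sessions=50_000), "weeks")
```

Under these assumptions, a single two-arm test needs roughly 53,000 visitors per arm. At 50,000 monthly sessions, that is about ten weeks of traffic if every session is eligible. Splitting the same traffic across parallel tests stretches the runtime further.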

DRIP's core offering is the Conversion Optimization License — a 6-month structured experimentation program that includes psychology-led research, parallel A/B testing, statistical analysis, and compounding learning. For brands that want to evaluate fit before committing, we offer a standalone CRO Audit that delivers a prioritized testing roadmap. The audit investment is credited toward the full license if you proceed. We do not offer ad-hoc test execution without the underlying research methodology, as isolated tests without a systematic learning framework consistently underperform.